PACT 2007 Tutorials & Workshops

The tutorials and workshops for PACT 2007 are listed below.

Registration for tutorials and workshops is handled through the PACT 2007 registration site.

Both half-day and full-day tutorials and workshops include lunch and breaks.

For workshop inquiries, please contact the Workshops Chair, Guang Gao (ggao [at] capsl.udel.edu).

For tutorial inquiries, please contact the Tutorials Chair, Avi Mendelson (avi.mendelson [at] intel.com).

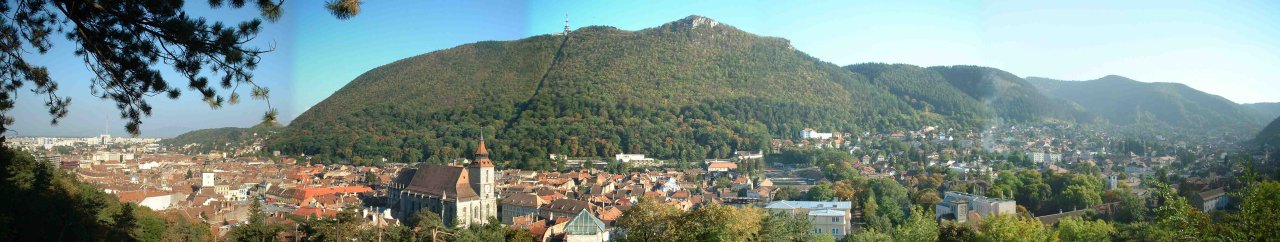

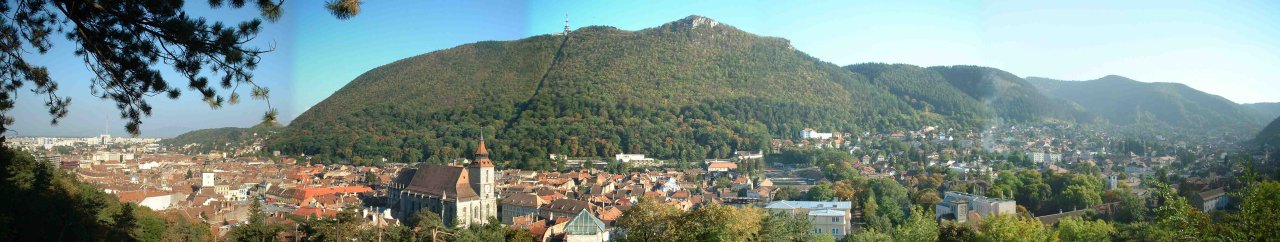

The tutorials and workshops will be held on Saturday, September 15, and Sunday, September 16, in the Aula of the University Transilvania of Brasov. (See the local information page for details and directions.) The lunches will be at the ARO Palace Hotel, and the breaks will be at the Aula.

The daily schedule for the tutorials and workshops is as follows:

- 08:30-12:00: Morning Session

- 10:00-10:30: Morning Break

- 12:00-13:45: Lunch at the ARO Palace Hotel (included with registration)

- 13:45-17:15: Afternoon Session

- 15:15-15:45: Afternoon Break

PACT 2007 Tutorials & Workshops - Sunday, September 16, 2007

| Event | Track (Room) | Session | Title | Instructors / Organizers |
|---|---|---|---|---|
| T3 | Tutorials Track (Aula) | Full day | Microarchitecture: Concepts, Tradeoffs, the Future | Instructor: Yale Patt (University of Texas, Austin) |
| T4 | Tutorials Track (Aula) | Morning | Does Multicore Change the Way We Should Design Caches? A Tutorial for Architects Interested in the Nuts-and-Bolts of Future Cache Technologies | Instructor: Hillery Hunter (IBM TJ Watson Research Center) |
| T5 | Tutorials Track (Aula) | Morning | Building High Performance Threaded Applications using Libraries, or Why you don't need a parallel compiler. | Instructor: Jim Cownie (Intel) |
| T7 | Tutorials Track (Aula) | Afternoon | Transactional Programming in a Multi-core Environment | Instructors: Ali-Reza Adl-Tabatabai (Intel), Bratin Saha (Intel) |
| W4 | Workshops Track (Aula) | Full day | GREPS: GCC for Research in Embedded and Parallel Systems | Organizers: Albert Cohen (INRIA, France), Ayal Zaks (IBM Haifa Research Lab) |
| W5 | Workshops Track (Aula) | Full day | MEDEA: MEmory performance: DEaling with Applications, systems and architecture | Organizers: Roberto Giorgi (University of Siena, Italy), Cosimo Antonio Prete (University of Pisa, Italy), Pierfrancesco Foglia (University of Pisa, Italy), Sandro Bartolini (University of Siena, Italy) |

The tutorial will be organized into four sessions of approximately 90 minutes each. The two morning sessions will focus on C++98, with the aim of sharpening and modernizing C++ skills. The two afternoon sessions will focus on C++0x, with the aim of presenting an overview of the upcoming standard.

C++98 - the aim of this half day is to sharpen and modernize C++ skills

Session 1: Speaking C++ like a native.

Multi-paradigm programming is programming that applies different styles, such as object-oriented programming and generic programming, where they are most appropriate. This talk presents simple examples of individual styles in ISO Standard C++ and examples where these styles are used in combination to produce cleaner, more maintainable code than could have been written using a single style only.
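As a rough illustration of the kind of combination this session describes (not taken from the tutorial materials), the sketch below mixes an object-oriented interface with a generic algorithm in C++98-compatible code. The names `Shape`, `Rectangle`, `Square`, `AddArea`, `total_area`, and `larger` are hypothetical and chosen only for this example.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

// Object-oriented style: an abstract interface with run-time polymorphism.
// All class names here are hypothetical, chosen for illustration.
class Shape {
public:
    virtual ~Shape() {}
    virtual double area() const = 0;
};

class Rectangle : public Shape {
public:
    Rectangle(double w, double h) : w_(w), h_(h) {
        assert(w >= 0.0 && h >= 0.0);  // precondition: non-negative sides
    }
    double area() const { return w_ * h_; }
private:
    double w_, h_;
};

class Square : public Shape {
public:
    explicit Square(double s) : s_(s) { assert(s >= 0.0); }
    double area() const { return s_ * s_; }
private:
    double s_;
};

// Generic style: a function object that any standard algorithm can apply.
struct AddArea {
    double total;
    AddArea() : total(0.0) {}
    void operator()(const Shape* s) { total += s->area(); }
};

// Combined: a generic algorithm (std::for_each) over a generic container,
// dispatching through the object-oriented interface.
double total_area(const std::vector<const Shape*>& shapes) {
    return std::for_each(shapes.begin(), shapes.end(), AddArea()).total;
}

// Generic style on its own: works for any type that provides operator<.
template <class T>
const T& larger(const T& a, const T& b) { return a < b ? b : a; }
```

Here the class hierarchy handles variation that is only known at run time, while the template and the standard algorithm handle variation that is known at compile time.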

Session 2: C++ in Safety-Critical Systems.

C++ is widely used in embedded systems programming and even in safety-critical and hard-real-time systems. This presentation discusses how to write code in these highly demanding application areas. First, the mapping of C++ code to hardware resources is reviewed, and the basic abstraction mechanisms (classes and templates) are considered from the perspective of this kind of code. Then, the JSF++ coding rules are examined as an example of a set of domain-specific rules. These rules have been and are being used for the development of millions of lines of C++. Questions addressed include: "Can I use templates in safety-critical code?" (Yes: you can and must.) and "Can I use exceptions in hard-real-time code?" (Sadly no, not with the current level of tool support.) Predictability of language features and minimization of programmer mistakes are key notions.

C++0x - the aim of this half day is to present an overview of the upcoming standard

Session 3: C++0x: an overview.

A good programming language is far more than a simple collection of features. My ideal is to provide a set of facilities that smoothly work together to support design and programming styles of a generality beyond my imagination. Here, I briefly outline rules of thumb (guidelines, principles) that are being applied in the design of C++0x. Then, I present the state of the standards process (we are aiming for C++09) and give examples of a few of the proposals being considered in the ISO C++ standards committee. I'll focus on general principles supported by minor examples. Features presented will include general initializer lists, generalized constant expressions, template aliases, and many minor additions.
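For orientation, the three features named above can be sketched as follows. This uses the syntax as it was eventually standardized in C++11 (the standard that C++0x became); the proposals under discussion at the time of the tutorial may have differed in detail. The names `square`, `NameMap`, `sum`, and `demo_sizes` are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <initializer_list>
#include <map>
#include <string>
#include <vector>

// Generalized constant expression: a function whose result can be used
// where a compile-time constant is required.
constexpr int square(int x) { return x * x; }
static_assert(square(4) == 16, "square(4) is evaluated at compile time");

// Template alias: a name for a family of types with some arguments fixed.
template <class T>
using NameMap = std::map<std::string, T>;

// General initializer lists: user-written functions can accept a braced list.
inline int sum(std::initializer_list<int> values) {
    int total = 0;
    for (int v : values) total += v;  // range-based for is also a C++11 addition
    return total;
}

// Braced initialization applied uniformly to standard containers.
inline std::size_t demo_sizes() {
    std::vector<int> v = {1, 2, 3};
    NameMap<int> coords = {{"x", 1}, {"y", 2}};
    assert(v.size() == 3);
    return v.size() + coords.size();
}
```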

Session 4: C++0x: Concepts.

"Concepts" is C++0x's type system for C++ types, combinations of types, and combinations of types and integers. They are introduced to allow programmers to directly express a template's requirements on its template arguments and thereby a template's requirements on its users. The result is greater expressiveness and better error handling, without loss of performance or flexibility. Concepts is the major new language feature of C++0x and will be central to the future development of generic programming.
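The concepts design described here was later withdrawn from C++0x, and a different design was standardized in C++20, so the sketch below does not use concepts syntax at all. It only approximates the underlying idea, stating a template's requirement on its argument up front, with a C++11 `static_assert` and a type trait. The function `average` is a hypothetical example.

```cpp
#include <cassert>
#include <type_traits>
#include <vector>

// Hypothetical helper. The requirement "T is an arithmetic type" is stated
// at the top of the template, so a bad argument fails with this message
// rather than with an error from deep inside the template body.
template <class T>
T average(const std::vector<T>& values) {
    static_assert(std::is_arithmetic<T>::value,
                  "average<T> requires an arithmetic type T");
    assert(!values.empty());  // run-time precondition, separate from the type check
    T total = T();
    for (typename std::vector<T>::size_type i = 0; i < values.size(); ++i)
        total += values[i];
    return total / static_cast<T>(values.size());
}
```

Calling `average` on a `std::vector<std::string>` would be rejected at compile time with the stated message. Unlike a real concept, this check lives inside the function body and plays no part in overload resolution.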

Each session is timed to allow plenty of time for questions and answers.

This tutorial presents CellSim, a source-level compatible modular simulator of the Cell processor architecture built on the UNISIM platform. The modular nature of the simulator makes it very flexible, and it is targeted at wide exploration of heterogeneous chip multiprocessors.

The first part of the tutorial will cover installation of the simulator, generation of code for the simulator, and the actual simulation of code. The second part will deal with modification of the simulator to explore changes in the architecture. Finally, we will cover joint modifications to the simulator and the gcc compiler to explore ISA extensions.

Process technology continues to deliver enormous capability on the individual chip. In ten years, we expect 100 billion transistors and a 10 GHz clock. The microarchitect's job: Harness that technology. To date, we have done okay, but not good enough. Too many transistors wasted in multiple cores that do not contribute or very large caches that do not pay for themselves. Too much energy wasted. I believe there is a better way, if we return to the fundamentals. I also believe that the "better way" is equally valid for the general purpose desktop as it is for the embedded processor.

In this tutorial, my plan is to first examine the fundamental principles that impact microprocessor performance in the future environment of 100 billion transistor chips running at 10 GHz. From there, I plan to discuss current and planned mechanisms for exploiting chip resources. Finally, I plan to describe what I believe will characterize the microarchitecture of microprocessors of the year 2017. Those are my plans. But, if previous tutorials are any predictor of future tutorials, the plan will be interrupted many times by questions from the attendees and digressions from the instructor. As to questions from the attendees, they are welcome at any time. As to digressions by the instructor, they tend to enrich the lectures. Besides, there doesn't seem to be any way to keep him from doing it.

- Part 1: Overview: the fundamentals, the challenges, and the tradeoffs. Why so many cores? What are the main constraints to performance?

- Part 2: Mechanisms: compile time, run time, and both. For example, branch prediction, predication, trace cache, block-structured ISA, transactional memory.

- Part 3: The latest chips (Pentium M, Cell, Barcelona) and what we can infer from them.

- Part 4: The microarchitecture of the year 2017.

As technology moves forward, innovative advancements will rely on researchers at the architecture, compiler, and software levels becoming familiar with the bottlenecks of silicon scaling. Two trends are of particular note: (1) in many microprocessor families, increasing amounts of silicon area are being devoted to caches, and (2) technology variability is causing stability concerns for our fundamental cache building block, six-transistor SRAM. For each of these concerns, there are aggravating factors present in many proposed multicore designs: (1) where die size is kept relatively equal to that of prior generations, inclusion of multiple cores, even if lighter-weight, often leaves less silicon area available for caches; (2) many proposals suggest complex multi-voltage management schemes to deal with power consumption, thus aggravating and complicating circuit-level challenges in the face of increasing transistor-level variability. In light of these factors, this tutorial will examine the fundamentals of cache design and alternatives to six-transistor SRAM.

The tutorial will take a nuts-and-bolts approach, explaining how bits are stored in cache cells, which are composed into arrays, and then joined with logic and management to form the caches we simulate at the architectural level. Discussion will center on circuit and technology properties that correlate to metrics commonly understood at the architecture level: cache capacity, cache access latency, and cache distance from the CPU.

Multi-threaded programming has been used in the software industry to improve the responsiveness of GUI applications, to improve the throughput of network-based applications, and to reduce the turnaround time of compute-bound applications through the use of parallelism. With the current trend to multi-core chips, the use of multi-threading to exploit the performance of even laptop hardware is becoming ever more important.

To help the software industry expand its use of multi-threading, Intel has been pushing the development of tools, languages, and libraries that make it easier to write multi-threaded code. Most current threaded programs use either direct calls to an underlying thread library (pthreads or winthreads) or language extensions such as OpenMP. Intel(R) Threading Building Blocks (Intel(R) TBB) is a C++ template library that provides an interesting alternative to these two approaches and has many benefits.

This tutorial will introduce the library approach and compare it with both OpenMP and native threads, explaining how code implemented with the Intel(R) TBB library achieves efficiency without requiring parallel language extensions in the compiler.
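To make the "library approach" concrete, here is a minimal sketch of a parallel loop written as an ordinary C++ function template, with no compiler extension involved. It is not the Intel TBB API: `parallel_apply` is a hypothetical helper built on `std::thread`, which entered the language with C++11, after this tutorial. It uses a fixed, static split of the index range and should not be read as how Intel TBB itself schedules work.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <thread>
#include <vector>

// Hypothetical library-style parallel loop (NOT the Intel TBB API).
// Calls body(i) for every i in [begin, end), splitting the range into
// contiguous chunks and running each chunk on its own thread.
template <class Body>
void parallel_apply(std::size_t begin, std::size_t end,
                    unsigned workers, Body body) {
    assert(begin <= end);
    if (workers == 0) workers = 1;
    const std::size_t n = end - begin;
    const std::size_t chunk = (n + workers - 1) / workers;  // ceiling division
    std::vector<std::thread> pool;
    for (unsigned w = 0; w < workers; ++w) {
        const std::size_t lo = std::min(end, begin + w * chunk);
        const std::size_t hi = std::min(end, lo + chunk);
        if (lo == hi) break;  // nothing left to hand out
        pool.emplace_back([lo, hi, body]() {
            for (std::size_t i = lo; i < hi; ++i) body(i);
        });
    }
    // Wait for every chunk to finish before returning to the caller.
    for (std::size_t t = 0; t < pool.size(); ++t) pool[t].join();
}
```

A caller passes the loop body as a function object, for example `parallel_apply(0, data.size(), 4, [&data](std::size_t i) { data[i] *= 2; });`. The body must be safe to run concurrently on different indices; here each index touches a distinct element.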

Since July 2007, Intel(R) TBB has been available under the GPL, so this tutorial should be of interest to programmers on all platforms.

Registered attendees at this tutorial will receive a free copy of the O'Reilly book Intel Threading Building Blocks, which has a list price of $34.99.

Tutorial T6 has been cancelled.

Tutorial T6: Design Challenges of the Development Tools to Exploit Parallelism Offered by Embedded Multicore Systems

Instructors: Viet Ngo (Freescale Semiconductor Inc.), Simona-Sorina Costinescu (Freescale Semiconductor, Romania), Vladimir Cambrea (Freescale Semiconductor, Romania), Adrian Gancev (Freescale Semiconductor, Romania), Mihail Nistor (Freescale Semiconductor, Romania)

Half Day (Afternoon), Sunday, September 16, 2007

Multicore solutions, hybrid or homogeneous, are widely used for embedded systems in symmetric and asymmetric programming models. Tools for debugging concurrent applications in these environments face a variety of target and OS combinations and the need to keep shared resources synchronized. The DSP world imposes additional challenges: applications must run faster, within restricted memory space and power consumption.

The tutorial presents hardware techniques exemplified on the Linux kernel for the Freescale dual-core Power Architecture MPC8641D, techniques to speed up various DSP algorithms, and the industry approach implemented in the Starcore compiler for two homogeneous multicore DSP platforms. It also explores systematic modeling frameworks for multicore support.

With single-thread performance starting to plateau, HW architects have turned to chip-level multiprocessing (CMP) to increase processing power. All major microprocessor companies are aggressively shipping multi-core products in the mainstream computing market. Moore's law will largely be used to increase HW thread-level parallelism through higher core counts in a CMP environment. CMPs bring new challenges into the design of the software system stack.

In this tutorial, we will talk about the shift to multi-core processors and the programming implications. In particular, we will focus on transactional programming. Transactions have emerged as a promising alternative to lock-based synchronization that eliminates many of the problems associated with it. For example, transactions eliminate deadlock, allow read sharing, provide fine-grained concurrency, and enable safe composition of atomic primitives. We will discuss the design of hardware and software transactional memory and quantify the tradeoffs between the different design points. We will show how to extend the Java and C languages with transactional constructs, and how to integrate transactions with compiler optimizations and the language runtime (e.g., memory manager and garbage collection).